How Many Column Families Does Titan Create on HBase

In previous HBase tutorials we looked at how to install HBase and develop suitable data models. In this tutorial we will build on those concepts to demonstrate how to perform create, read, update and delete (CRUD) operations using the HBase shell. If you have not installed HBase, please refer to the HBase base tutorial to learn how to install and configure HBase. For a review of data models, please refer to the tutorial on how to create effective data models in HBase.

The shell can be used in interactive or non-interactive mode. To pass commands to HBase in non-interactive mode from an operating system shell, you use the echo command and the | operator and pass the non-interactive option -n. Note that this style of running commands can be tedious. For instance, to create a table you use the command below.

echo "create 'courses', 'id'" | hbase shell -n

Another way of running HBase in non-interactive mode is to create a text file with each command on its own line and then specify the path to the text file. For instance, if we create a text file and save it as /usr/local/createtables.txt, we run the commands as shown below. This will be demonstrated later.

hbase shell /usr/local/createtables.txt

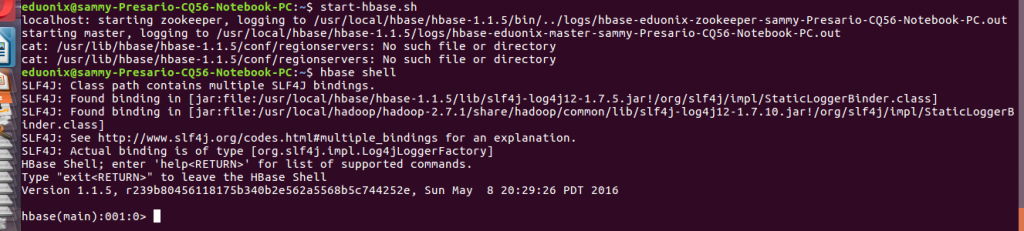
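
As a rough illustration of the file-based approach, the Ruby sketch below writes a command file with one HBase shell command per line. It writes to a temporary directory rather than /usr/local so that it runs without special permissions. The list and exit lines are assumptions added for illustration and are not part of the original example.

```ruby
require 'tmpdir'

# One HBase shell command per line. 'list' and 'exit' are illustrative
# additions: 'list' shows the tables, 'exit' leaves the shell afterwards.
commands = [
  "create 'courses', 'id'",
  "list",
  "exit"
]

# A temporary location stands in for /usr/local/createtables.txt.
script_path = File.join(Dir.mktmpdir, 'createtables.txt')
File.write(script_path, commands.join("\n") + "\n")

# Show what the command file contains.
puts File.read(script_path)
```

The resulting file would then be passed to hbase shell as its only argument. Ending the file with exit is generally what returns control to the operating system prompt once the commands have run.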

To access the HBase shell in interactive mode, start HBase using the start-hbase.sh command, then invoke the shell by running hbase shell at a terminal.

Before we demonstrate the use of the HBase shell, there are several important points to be aware of when typing commands in the shell. These are highlighted below.

- Names identifying tables and columns need to be quoted

- There is no need to quote constants

- Command parameters are separated using commas

- To run a command after typing it in the shell, hit the Enter key

- Double quoting is required when you need to use binary keys or values in the shell

- To separate keys and values you use the => characters

- To specify a key you use predefined constants like NAME, VERSIONS and COMPRESSION

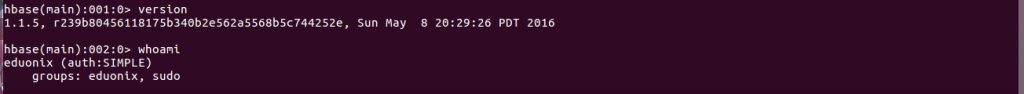

We will begin with general commands for HBase. To check the version you are running, use the version command. To identify the user who is running HBase, use the whoami command. To check the status of the cluster, use the status command. Options that can be used with the status command are summary, detailed or simple. The default option is summary. To request detailed cluster status you would use the command shown below. The status command is only useful if you are running an HBase cluster; when running a single instance it is of little use.

status 'detailed'

In its simplest form the create command is used to create a table by specifying the table name and column family. To reduce the disk space used for storing data it is advisable to use short column family names. This is because each value is stored in a fully qualified manner, so the family name is repeated with every stored cell. Frequent changes of column names and the use of many column families are not good practice, so this is a design area that needs careful thought. A compromise design is to have a few column families and then have many columns in each family. The format for naming columns is to specify the column family and then the column name (family:qualifier).
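
To make the cost of long family names concrete, here is a rough Ruby estimate of the bytes stored per cell. It is a sketch that assumes the uncompressed KeyValue layout and ignores tags, compression and block encoding. The helper name approx_cell_bytes and the sample row key and value are made up for illustration.

```ruby
# Fixed bytes per cell in the KeyValue layout: key length (4), value length (4),
# row length (2), family length (1), timestamp (8), key type (1).
FIXED_OVERHEAD = 4 + 4 + 2 + 1 + 8 + 1

# Hypothetical helper: approximate on-disk size of one uncompressed cell.
def approx_cell_bytes(row, family, qualifier, value)
  FIXED_OVERHEAD + row.bytesize + family.bytesize + qualifier.bytesize + value.bytesize
end

long_name  = approx_cell_bytes('row-0001', 'programming', 'java', 'intro')
short_name = approx_cell_bytes('row-0001', 'p', 'java', 'intro')

puts "long family name:  #{long_name} bytes per cell"
puts "short family name: #{short_name} bytes per cell"
puts "difference over 1,000,000 cells: #{(long_name - short_name) * 1_000_000} bytes"
```

In this model the only difference between the two cells is the length of the family name, and that difference is paid again for every cell written.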

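A basic command that creates a table with two column families is shown below.

create 'courses', 'hadoop', 'programming'

Columns are not declared in the create command, and a column family name cannot contain a colon. A column is added to a family the first time a value is written to it using the family:qualifier form, as shown below (the row key and value here are placeholders).

put 'courses', 'row1', 'hadoop:spark', 'value1'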

To optimize data storage, HBase offers several options for managing how data is stored. Compression reduces the amount of data stored on disk and sent over the network but increases CPU workload. Three algorithms available for compression are Snappy, LZO and GZIP. GZIP compresses data better than the other two algorithms but its CPU requirements are higher. GZIP is a better choice when compressing infrequently queried data, while the other two are more appropriate for data that is frequently queried. Compression is set per column family, so the create command is enhanced as shown below to enable it.

create 'courses', {NAME => 'hadoop', COMPRESSION => 'SNAPPY'}, {NAME => 'programming', COMPRESSION => 'SNAPPY'}

HBase allows you to keep multiple versions of a cell. This arises because data changes are not applied in place; instead a change results in a new version. To control how this happens you specify the maximum number of versions or a time to live (TTL). When either of these settings is exceeded, the excess data is removed when compaction is done. An example that keeps up to four versions is shown below.

create 'courses', {NAME => 'hadoop', VERSIONS => 4}, {NAME => 'programming', VERSIONS => 4}
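
The interaction between a version limit and a TTL can be sketched with a toy Ruby model. This is not how HBase implements compaction. It only illustrates the retention rule, with timestamps in seconds for simplicity, and surviving_versions is a hypothetical helper name.

```ruby
# Toy model: return the version timestamps that would survive a compaction,
# newest first. A version is dropped if it is older than the TTL or if more
# than max_versions newer versions exist.
def surviving_versions(timestamps, max_versions:, ttl_seconds: nil, now: Time.now.to_i)
  fresh = timestamps.select { |ts| ttl_seconds.nil? || now - ts <= ttl_seconds }
  fresh.sort.reverse.first(max_versions)
end

now = 1_000_000
# Six writes to the same cell at different times.
writes = [now - 500, now - 400, now - 300, now - 200, now - 100, now - 10]

# Only the version limit applies: the four newest versions remain.
p surviving_versions(writes, max_versions: 4, now: now)

# A 250-second TTL also applies: only three versions are recent enough.
p surviving_versions(writes, max_versions: 4, ttl_seconds: 250, now: now)
```

With both settings in place, whichever limit is stricter for a given cell decides how many versions remain.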

To improve scalability, HBase offers a way to split tables into smaller units. These table splits are referred to as regions. At the start a table has a single region, but as more rows are added the need to split the table arises. When creating a table you can supply points that will be used to decide how data will be split. This process is referred to as pre-splitting. Pre-splitting enables you to even out load distribution across a cluster, and it is an excellent choice when you have prior knowledge of the key distribution. However, a bad choice of split points will not distribute load evenly, resulting in poor cluster performance.

There are no tested rules to guide you in choosing the number of regions. Best practice is to begin with a number that is a low multiple of the number of region servers and then leave HBase to do automatic splitting. A RegionSplitter utility is available in HBase to help in deciding split points. HexStringSplit and UniformSplit are two algorithms that can help you decide on split points, but you can also use custom algorithms.

The utility can be used to split a table by specifying the number of regions and the column families to be used, as shown below. The statement below creates a table named split_table with 10 regions and the hadoop column family.

hbase org.apache.hadoop.hbase.util.RegionSplitter split_table HexStringSplit -c 10 -f hadoop

The regions can also be created when creating the table if you have split points, as shown below.

create 'split_table', 'hadoop', SPLITS => ['A', 'B', 'C']
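
When row keys are hex strings, evenly spaced split points can be computed rather than typed by hand. The Ruby sketch below is similar in spirit to HexStringSplit but is not HBase's implementation. It assumes 8-character hex keys spread uniformly over the whole range, and hex_split_points is a hypothetical helper name.

```ruby
# Return (regions - 1) evenly spaced 8-character hex split points,
# dividing the key space 00000000..ffffffff into equal slices.
def hex_split_points(regions)
  raise ArgumentError, 'need at least 2 regions' if regions < 2

  key_space = 2**32
  (1...regions).map { |i| format('%08x', key_space * i / regions) }
end

p hex_split_points(4)
# prints ["40000000", "80000000", "c0000000"]
```

Because the HBase shell is a JRuby interpreter, an array built this way could in principle be passed to SPLITS in place of a literal list. Note that n regions need only n - 1 split points.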

In this first part on using the HBase shell we confined ourselves to discussing the use of the create command. Options for creating tables that perform optimally were discussed, including data compression, table splitting and row versioning. In subsequent tutorials we will look at actual use of the shell for loading and manipulating data.

Source: https://blog.eduonix.com/bigdata-and-hadoop/learn-how-to-use-hbase-shell-to-create-tables-query-data-update-and-delete-data/
